Research

We aim to develop the best possible intelligence for robots. To achieve this goal, we focus on two main directions in this field:

An autonomous robot is an integrated system capable of performing a series of operations on its surroundings. These operations are usually determined with reference to the external environment, as perceived and measured by the robot itself. A unified autonomous pipeline should provide a certain level of secure automation across Perception, Cognition, and Control. Research topics here include sensor fusion, SLAM, local and global planning, collision avoidance, and the machine learning acceleration of these components.

Future robots may be able to learn from both their intrinsic capabilities and the features of their surroundings. This should help robots acquire adaptive solutions and skills for real-world tasks such as navigation and grasping. Such tasks are complex for robots and hard to model with rules. A data-driven pipeline is proposed to discover the relationship between robots and their environment, and to establish a pool of transferable skills that robots can use to interact with real-world subjects.


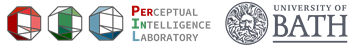

We live in highly dynamic and uncontrolled surroundings. Understanding such a scene may involve capturing and analyzing information at the Geometric and Semantic levels. The former focuses on extracting geometric entities and primitives from a scene and considering the interactions between them. The latter typically learns the human-understandable cues behind sets of geometric primitives. Correctly understanding a scene is an essential ability for autonomous robots exploring the real world.

If you find these topics interesting and would like to work with us, please see our current Vacancies and [Get In Touch].